Domain adapting LLMs

Suppose you have a general-purpose LLM and a target domain — say, biomedical literature. The standard playbook is straightforward: collect domain text, keep training the model, watch the loss go down, ship it. This workflow is intuitive and widespread. It also has a quiet failure mode that most practitioners never notice until something downstream breaks.

Over the past two years, I have been running experiments on domain-adaptive and continual pretraining across a wide range of models, domains, and training setups. This post synthesizes what I found — specifically, three things that surprised me: why the metric everyone uses is broken, how to fix it, and what continual pretraining actually does to a model compared to standalone adaptation.

1. The metric you’re trusting is broken

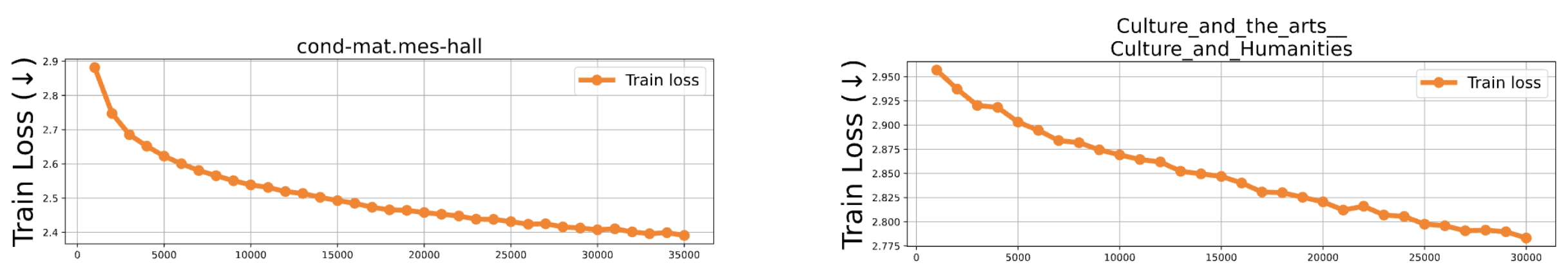

Let’s start with a concrete experiment. Take GPT-2 and continue pretraining it on two groups of domains: Wikipedia articles (History, Culture, Technology, …) and scientific papers from Semantic Scholar (Physics, Mathematics, Astrophysics, …). In both cases, the training loss goes down — the model is learning, nothing looks wrong.

Now look at the validation and test perplexity — the number everyone reports. For the scientific papers domain, it improves after adaptation, as expected. For the Wikipedia domain, it gets worse. The model was explicitly trained on the target domain, the training loss dropped, and yet the held-out test perplexity deteriorated.

Why? The key is distributional similarity. Wikipedia-style text is already well represented in GPT-2’s pretraining corpus (WebText, for which OpenWebText serves as the open replication) and in the pretraining corpora of most other LLMs. When you continue training on data that closely resembles what the model has already seen, you are not teaching it something new but nudging it toward a slightly different local optimum in a part of the loss landscape it has already visited. The model overfits to the narrow distribution of your adaptation set at the expense of the broader representation it previously had. The scientific paper domains, by contrast, are genuinely out-of-distribution. There the model has something real to learn, and the perplexity improvement reflects that.

But there is a deeper problem. Even when perplexity improves, it may not be telling you what you think it is.

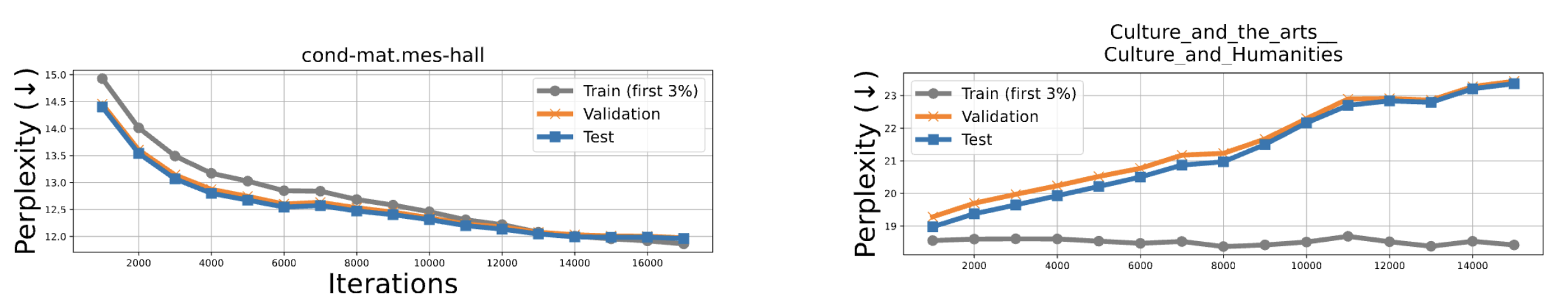

When you break perplexity down to the token level, the degradation on Wikipedia domains is not spread evenly across the vocabulary. It is concentrated in a small number of domain-irrelevant tokens — newline characters, punctuation, generic function words. These tokens appear frequently in any text, so they dominate the aggregate perplexity. A model could be learning your domain vocabulary perfectly and still show degraded perplexity if its predictions for \n and . have shifted slightly.

Perplexity is an average over all tokens, domain-relevant and irrelevant alike. As an adaptation signal, it is fundamentally noisy.
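To make the averaging problem concrete, here is a minimal sketch that computes aggregate perplexity from per-token negative log-likelihoods and then shows how much of the total loss each token type accounts for. The token list and loss values are made up for illustration; in practice they would come from a forward pass of the model before and after adaptation.

```python
import math
from collections import defaultdict

def perplexity(nlls):
    """Perplexity is exp of the mean negative log-likelihood over all tokens."""
    return math.exp(sum(nlls) / len(nlls))

def loss_share_by_token(tokens, nlls):
    """Fraction of the total NLL contributed by each distinct token."""
    totals = defaultdict(float)
    for tok, nll in zip(tokens, nlls):
        totals[tok] += nll
    grand_total = sum(nlls)
    return {tok: total / grand_total for tok, total in totals.items()}

# Hypothetical per-token NLLs (in nats) for the same text, before and after adaptation.
tokens = ["\n", ".", "the", "\n", ".", "photon", "\n", "the"]
before = [0.5, 0.4, 0.6, 0.5, 0.4, 6.0, 0.5, 0.6]
after = [1.5, 1.2, 0.9, 1.5, 1.2, 2.0, 1.5, 0.9]

ppl_before = perplexity(before)
ppl_after = perplexity(after)
shares_after = loss_share_by_token(tokens, after)

print(f"perplexity before: {ppl_before:.2f}, after: {ppl_after:.2f}")
for tok, share in sorted(shares_after.items(), key=lambda kv: -kv[1]):
    print(f"{tok!r}: {share:.0%} of total loss after adaptation")
```

In this toy example the domain term `photon` becomes much easier to predict, yet aggregate perplexity gets worse because the frequent newline and punctuation tokens each got slightly harder.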

2. What to use instead: prediction rank on domain-specific targets

If perplexity is the wrong metric, what is the right one? My answer, developed in follow-up work, is prediction rank computed on domain-specific target terms.

The idea is simple. Rather than asking “how well does the model predict the average token in this document?”, we ask “how well does the model predict the vocabulary that is specific and diagnostic of this domain?” For example, a model that ranks backpropagation highly when given a prompt about gradient descent is demonstrating genuine domain knowledge. A model that predicts \n well is not.
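As a rough sketch of what prediction rank means in code: given the model’s next-token scores for a prompt, the rank of a target is its position when the vocabulary is sorted by descending score. The vocabulary, logits, and the choice of mean rank as the aggregate are assumptions for illustration, not the exact formulation used in the work described here.

```python
def prediction_rank(logits, target_id):
    """1-based rank of target_id when tokens are sorted by descending logit.

    Ties are resolved optimistically: only strictly higher scores count.
    """
    target_score = logits[target_id]
    return 1 + sum(1 for score in logits if score > target_score)

def mean_rank(ranks):
    """One possible aggregate over many prompt-target pairs (lower is better)."""
    return sum(ranks) / len(ranks)

# Hypothetical 5-token vocabulary and next-token logits for a prompt
# about gradient descent.
vocab = ["\n", ".", "the", "backpropagation", "banana"]
logits = [1.2, 0.8, 2.5, 2.1, -3.0]

rank = prediction_rank(logits, vocab.index("backpropagation"))
print(rank)
```

A rank of 1 means the target was the model’s top prediction; tracking the rank of domain terms only is what keeps newline and punctuation tokens out of the signal.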

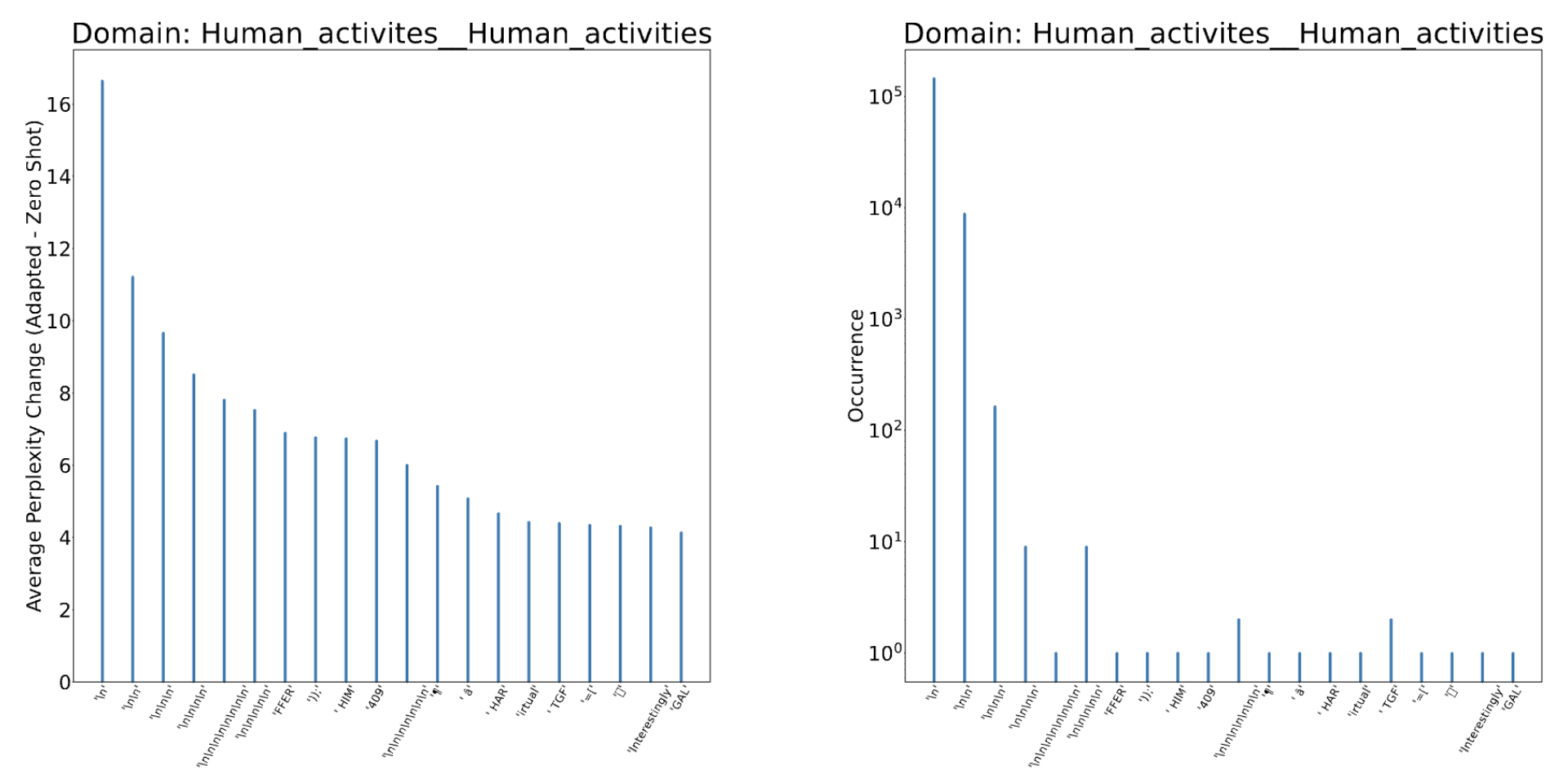

The pipeline to construct domain-specific prompt-target pairs is fully automated: given any raw text corpus, it extracts domain keywords using n-gram statistics and embedding-based deduplication, matches sentences to keywords, and constructs prompt–target pairs where the target is a domain-specific term. For example, for the keyword reinforcement learning, a target might be replay given the prompt “Prior attempts at improving data efficiency in reinforcement learning involved the use of an Experience”. No human annotation, no LLM calls, no contamination risk. Conveniently, the benchmark can be regenerated from fresh data at any time.
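The last step of that pipeline, turning a matched sentence into a prompt–target pair, can be sketched as follows. This is a heavily simplified illustration: the keyword and the set of domain terms are passed in by hand (the real pipeline derives them from n-gram statistics and embedding-based deduplication), the function name is hypothetical, and the end of the example sentence is invented so that the sketch has something to split.

```python
import re

def make_prompt_target_pairs(sentences, keyword, domain_terms):
    """Build (prompt, target) pairs from sentences that mention the keyword.

    The target is the first domain term that appears after the keyword;
    the prompt is everything in the sentence before that term.
    """
    pairs = []
    for sentence in sentences:
        kw_pos = sentence.lower().find(keyword.lower())
        if kw_pos == -1:
            continue  # sentence does not match this keyword
        search_from = kw_pos + len(keyword)
        for match in re.finditer(r"[A-Za-z][A-Za-z\-]*", sentence):
            if match.start() < search_from:
                continue
            word = match.group(0).lower()
            if word in domain_terms:
                prompt = sentence[:match.start()].rstrip()
                pairs.append((prompt, word))
                break
    return pairs

# Illustrative input; the words after "Experience" are made up.
sentences = [
    "Prior attempts at improving data efficiency in reinforcement learning "
    "involved the use of an Experience Replay buffer.",
    "This sentence does not mention the keyword at all.",
]
pairs = make_prompt_target_pairs(sentences, "reinforcement learning", {"replay"})
print(pairs)
```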

We validate this against a manually curated expert benchmark built from a graduate-level deep learning textbook (281 hand-crafted prompt–target pairs). The correlation between our automated pipeline’s model rankings and the expert benchmark rankings is r = 0.99 (p < 0.001). The pipeline is not just convenient; it also agrees with expert human judgment better than multiple-choice question benchmarks do. Multiple-choice results, for instance, can change substantially when the answer options are merely shuffled.

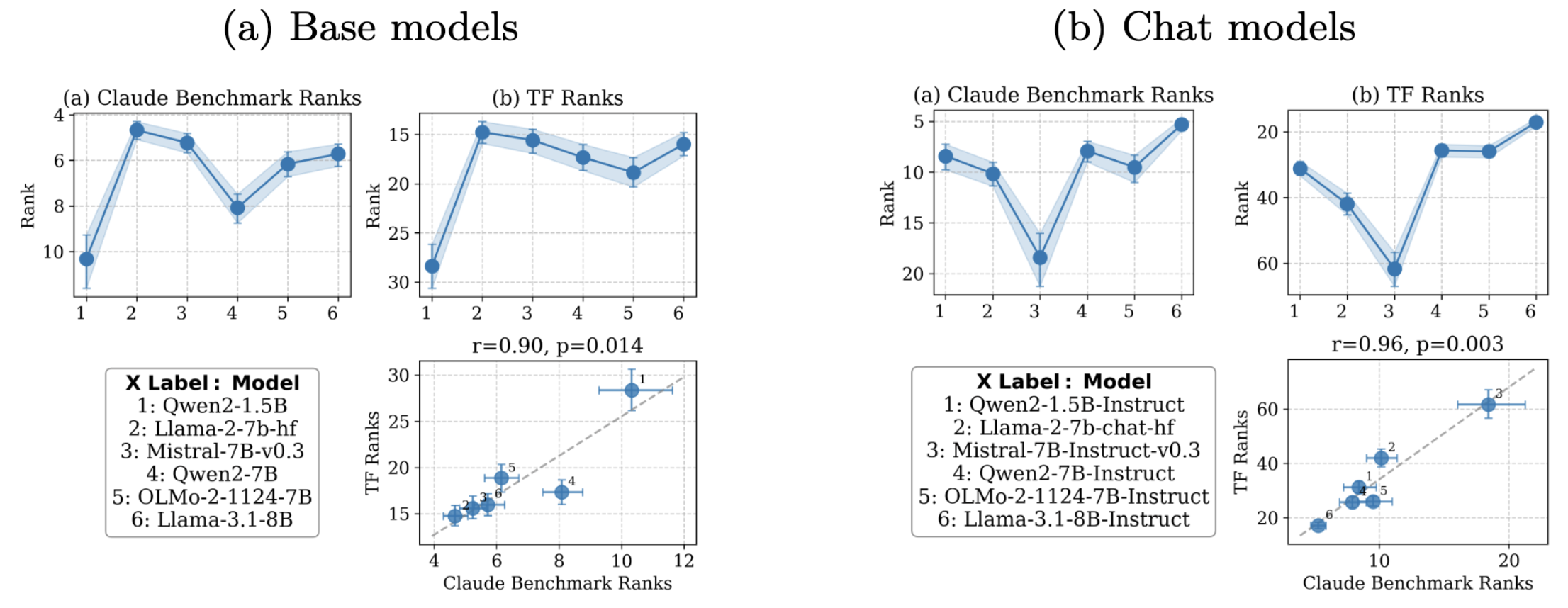

Now let’s see what this metric reveals that perplexity misses, specifically when evaluating adapted models across model families.

Two things stand out here. First, the pipeline ranks correlate strongly with the reference benchmark we generated with Claude for both base and chat models, whereas perplexity holds up for base models but collapses as a signal once you add instruction tuning. Second, base models are almost always better than their chat-aligned counterparts on domain knowledge: instruction tuning trades away factual domain knowledge for conversational compliance. This “alignment tax” is real, measurable, and heterogeneous: Llama-2-7B and Mistral-7B show severe drops, while Qwen2-1.5B and Llama-3.1-8B are much more robust.

3. Continual pretraining: a better adaptation strategy

So far we have discussed whether to adapt and how to measure adaptation. Now we switch to how to adapt, specifically when you need to specialize a model across multiple domains over time.

Continual pretraining is the process of training a model sequentially on a series of domain corpora, one after another, without retraining from scratch. At each step, the model is updated on a new domain corpus and a checkpoint is saved. The goal is to end up with a model that has accumulated knowledge across all domains while retaining what it learned earlier.
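The procedure reduces to a simple loop. The sketch below shows only its shape: the training, evaluation, and checkpointing functions are toy stand-ins (all names are hypothetical), and a real setup would plug in an actual training run, the evaluation metric of choice, and a checkpoint writer.

```python
def continual_pretrain(model, domain_corpora, train_on_domain, evaluate, save_checkpoint):
    """Train sequentially on each domain, checkpointing and evaluating after each step."""
    history = []
    for step, (domain, corpus) in enumerate(domain_corpora, start=1):
        # The model from the previous domain is the starting point for the next one.
        model = train_on_domain(model, corpus)
        save_checkpoint(model, step, domain)
        history.append((step, domain, evaluate(model)))
    return model, history

# Toy stand-ins so the sketch runs end to end.
saved = []

def toy_train(model, corpus):
    return model + [len(corpus)]  # "model" is just a list of corpus sizes seen so far

def toy_evaluate(model):
    return sum(model)

def toy_save(model, step, domain):
    saved.append((step, domain, list(model)))

corpora = [
    ("physics", ["p1", "p2"]),
    ("math", ["m1"]),
    ("cs.AI", ["a1", "a2", "a3"]),
]
final_model, history = continual_pretrain([], corpora, toy_train, toy_evaluate, toy_save)
print(history)
```

Keeping one checkpoint per domain is what makes it possible to trace how knowledge of any single domain changes along the sequence.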

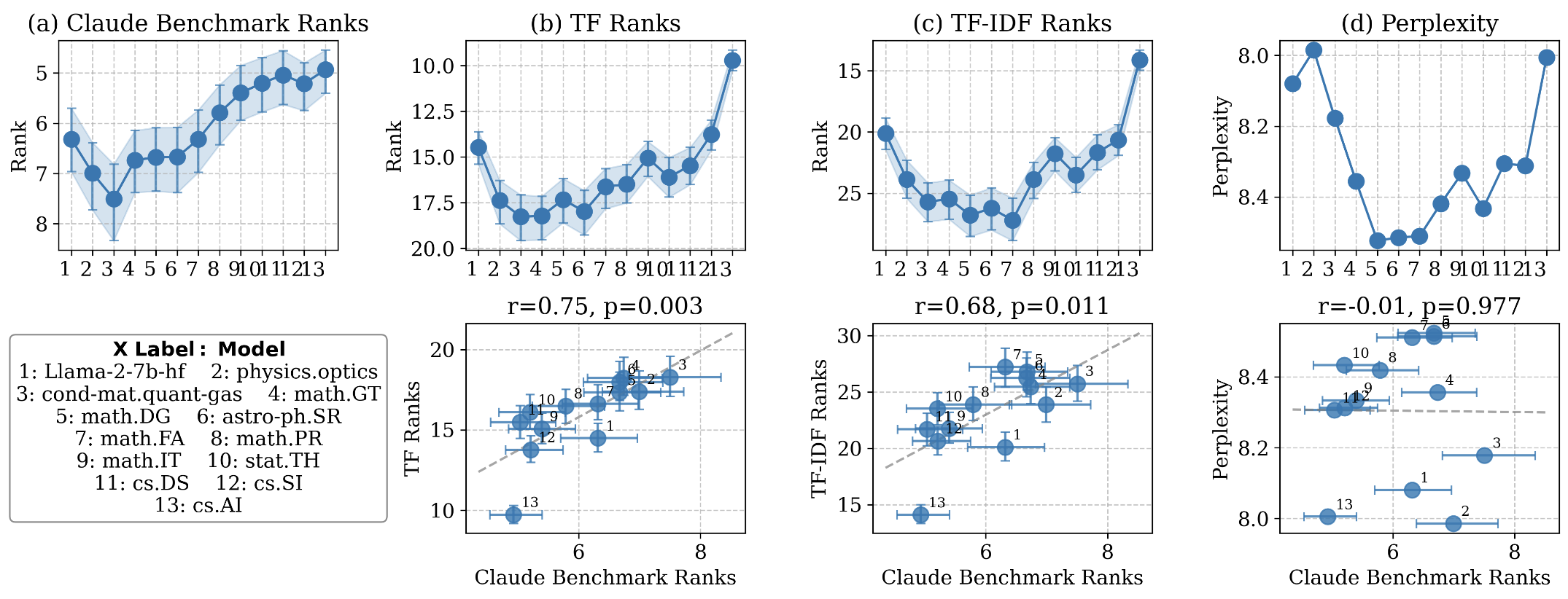

To understand what continual pretraining actually does, consider the following experiment. We take Llama-2-7B and train it sequentially on 12 domains, starting from domains dissimilar to our target (CS.AI) and progressively moving toward it. At each checkpoint, we measure domain knowledge using the prediction rank pipeline from Section 2.

The rank-based metrics tell a coherent story: domain knowledge of CS.AI initially drops as the model trains on unrelated domains, stabilizes in the middle, then improves as training domains converge toward CS.AI. Perplexity shows no such pattern — it oscillates without correlation to what the model actually knows. This is the clearest demonstration I have of why perplexity is the wrong tool for tracking continual learning progress.

3.1. Continual pretraining vs. standalone domain adaptation

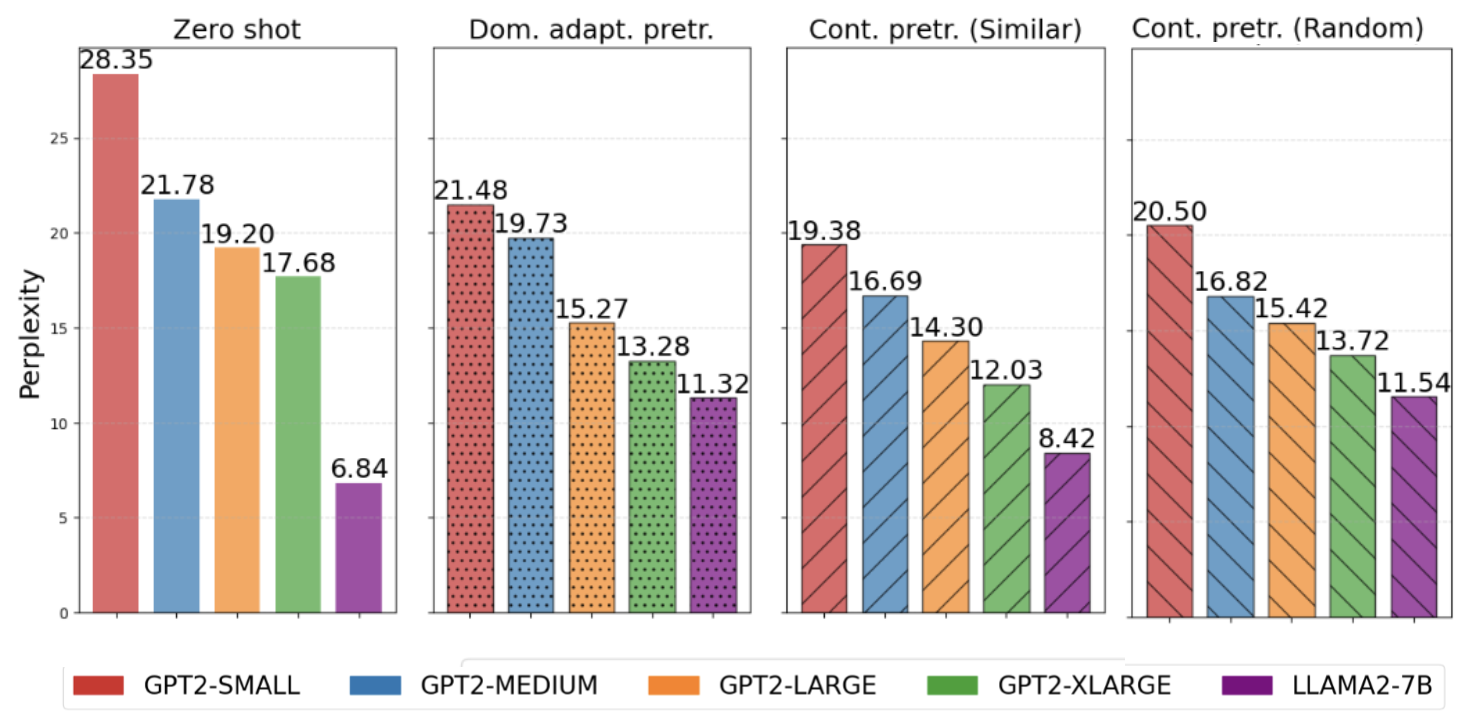

Now for the key practical comparison: is it better to continually pretrain across many domains, or to adapt directly to your target domain in a single step?

Across GPT-2 model sizes (Small, Medium, Large, XL) and RoBERTa, continual pretraining consistently achieves lower final perplexity on the target domain than standalone domain-adaptive pretraining (DAPT). The margin is substantial for smaller models and narrows but persists for larger ones.

There is an important caveat for large models. Llama-2-7B did not benefit from continual pretraining in our setup. The reason is not architectural; it is a data quantity issue. The individual domain corpora we used (approximately 7B tokens from the M2D2 dataset) are too small to meaningfully shift a 7B-parameter model in a single epoch. If your target domain corpus is large enough and your model is large, standalone DAPT may be sufficient. The threshold scales with model size.

3.2. Domain ordering matters (perhaps surprisingly)

When you have a sequence of training domains, does the order matter? Yes, substantially. We compared two orderings: similar-order (semantically related domains follow one another) and random-order (domains are shuffled).

The counterintuitive finding: random domain ordering leads to better final perplexity and less forgetting in the general case. When the model moves between very different domains, the parameter updates are more varied and seem to produce broader, more transferable representations. Gradients from dissimilar domains are less likely to interfere destructively with each other.

Similar-order training wins in one specific scenario: when you are deliberately building a domain expert and care about specialization over generality. If your final training domain is your target and all preceding domains are closely related to it, the model specializes tightly. Whether that is desirable depends entirely on your downstream use case.
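The two orderings can be sketched as follows. How the similar-order sequence is built is an assumption here: this version greedily walks to the most similar unvisited domain using cosine similarity over one embedding per domain, and the 2-D embeddings are invented for illustration.

```python
import math
import random

def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def similar_order(embeddings, start):
    """Greedy chain: always move to the most similar domain not yet visited."""
    order = [start]
    remaining = set(embeddings) - {start}
    while remaining:
        current = embeddings[order[-1]]
        # sorted() makes tie-breaking deterministic.
        nxt = max(sorted(remaining), key=lambda d: cosine(current, embeddings[d]))
        order.append(nxt)
        remaining.remove(nxt)
    return order

def random_order(domains, seed=0):
    """Shuffled ordering; the seed keeps the run reproducible."""
    order = list(domains)
    random.Random(seed).shuffle(order)
    return order

# Hypothetical domain embeddings (e.g. mean document embeddings per domain).
embeddings = {
    "history": (1.0, 0.0),
    "culture": (0.9, 0.1),
    "physics": (0.1, 0.9),
    "math": (0.0, 1.0),
}
chain = similar_order(embeddings, "history")
shuffled = random_order(embeddings)
print(chain)
print(shuffled)
```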

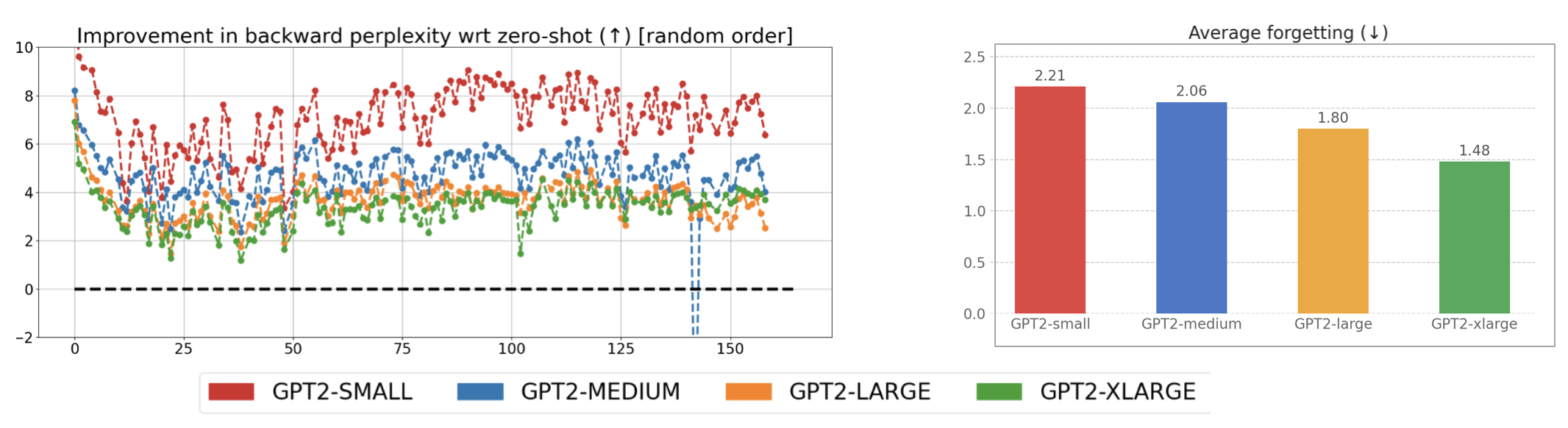

3.3. Smaller models: faster learners, faster forgetters

One finding that carries immediate practical weight: smaller models show the highest rates of both learning and forgetting. When a small model (GPT-2 Small or Medium) is trained on a new domain, it adapts rapidly — but it also loses prior domain knowledge faster than larger models. Larger models adapt more slowly but retain more of what they previously knew.
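One way to quantify both effects, offered here as an assumption rather than the exact definition behind the findings above, is to record perplexity on every domain after every training step. Learning is then the drop on a domain right after training on it, and forgetting is how much that perplexity has risen again by the end of the sequence. The matrix values are made up.

```python
def learning_and_forgetting(ppl):
    """Compute per-domain learning and forgetting from a perplexity matrix.

    ppl[i][j] is the perplexity on domain j after training on the first i
    domains, with domains indexed in training order and row 0 the base model.
    """
    n = len(ppl) - 1  # number of training steps
    # Drop in perplexity on domain j caused by training on domain j.
    learning = [ppl[j][j] - ppl[j + 1][j] for j in range(n)]
    # Rise in perplexity on domain j between learning it and the final checkpoint.
    forgetting = [ppl[n][j] - ppl[j + 1][j] for j in range(n)]
    return learning, forgetting

# Hypothetical perplexities for a 3-domain sequence.
ppl = [
    [30.0, 40.0, 50.0],  # base model
    [20.0, 39.0, 49.0],  # after domain 0
    [24.0, 25.0, 48.0],  # after domain 1
    [27.0, 28.0, 30.0],  # after domain 2
]
learning, forgetting = learning_and_forgetting(ppl)
print(learning, forgetting)
```

Comparing these two lists across model sizes is one way to see the trade-off: a fast learner shows large values in both.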

4. A decision checklist

Putting everything together, here is how I now approach domain adaptation decisions:

- Measure distributional distance first. Compute MMD or Fréchet distance between your target domain and the base model’s pretraining data (if known). If distance is low (your domain is generic, web-like, or similar to Wikipedia), standalone adaptation will likely hurt more than help.

- Use prediction rank, not perplexity, to evaluate. Build a small domain-specific benchmark using our pipeline described above. Track model rankings rather than aggregate perplexity. This will correctly identify when adaptation has improved genuine domain knowledge versus when it has just shifted the distribution of function tokens.

- Prefer continual pretraining over standalone domain adaptation when you have access to multiple related corpora and are working with models up to ~1.5B parameters. The multi-domain scaffold consistently improves final domain performance.

- Randomize domain order unless you are deliberately building a narrow specialist. For general-purpose accumulation of domain knowledge, shuffled training leads to better transfer and less catastrophic forgetting.

- Check alignment tax if using a chat model. Base models consistently outperform their chat-aligned counterparts on domain knowledge tasks. If your application is knowledge retrieval or completion rather than conversation, consider whether you need instruction tuning at all, or at least measure what it costs you.
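For the first checklist item, a minimal sketch of a squared MMD estimate between two sets of document embeddings might look like this. The RBF kernel, the `gamma` value, and the toy 2-D embeddings are all assumptions; in practice the inputs would be embeddings of samples from the target domain and from (a proxy for) the pretraining corpus.

```python
import math

def rbf_kernel(u, v, gamma):
    """Gaussian (RBF) kernel between two vectors."""
    return math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(u, v)))

def mmd_squared(xs, ys, gamma=1.0):
    """Biased estimate of squared MMD between samples xs and ys."""
    def mean_kernel(a, b):
        return sum(rbf_kernel(u, v, gamma) for u in a for v in b) / (len(a) * len(b))
    return mean_kernel(xs, xs) + mean_kernel(ys, ys) - 2 * mean_kernel(xs, ys)

# Hypothetical embeddings: a pretraining sample, a similar domain, a distant domain.
pretraining = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1)]
near_domain = [(0.05, 0.05), (0.1, 0.1), (0.0, 0.05)]
far_domain = [(3.0, 3.0), (3.1, 3.0), (3.0, 3.1)]

d_near = mmd_squared(pretraining, near_domain)
d_far = mmd_squared(pretraining, far_domain)
print(f"near: {d_near:.4f}, far: {d_far:.4f}")
```

A small value relative to other candidate domains suggests the target is already well covered, which is the case where standalone adaptation is most likely to hurt.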

This post synthesizes findings from two collaborative papers: Adaptation Odyssey in LLMs: Why Does Additional Pretraining Sometimes Fail to Improve? (EMNLP 2024) and Investigating Continual Pretraining in Large Language Models: Insights and Implications (TMLR 2025). The evaluation pipeline described in Section 2 is part of ongoing work.